The $6 Billion Question: Who Controls AI When It Starts Spending Your Money?

I've been tracking something that keeps me up at night.

Companies are deploying AI agents that can browse websites, execute purchases, and negotiate contracts. But when I ask executives about their AI spending protocols, I get blank stares.

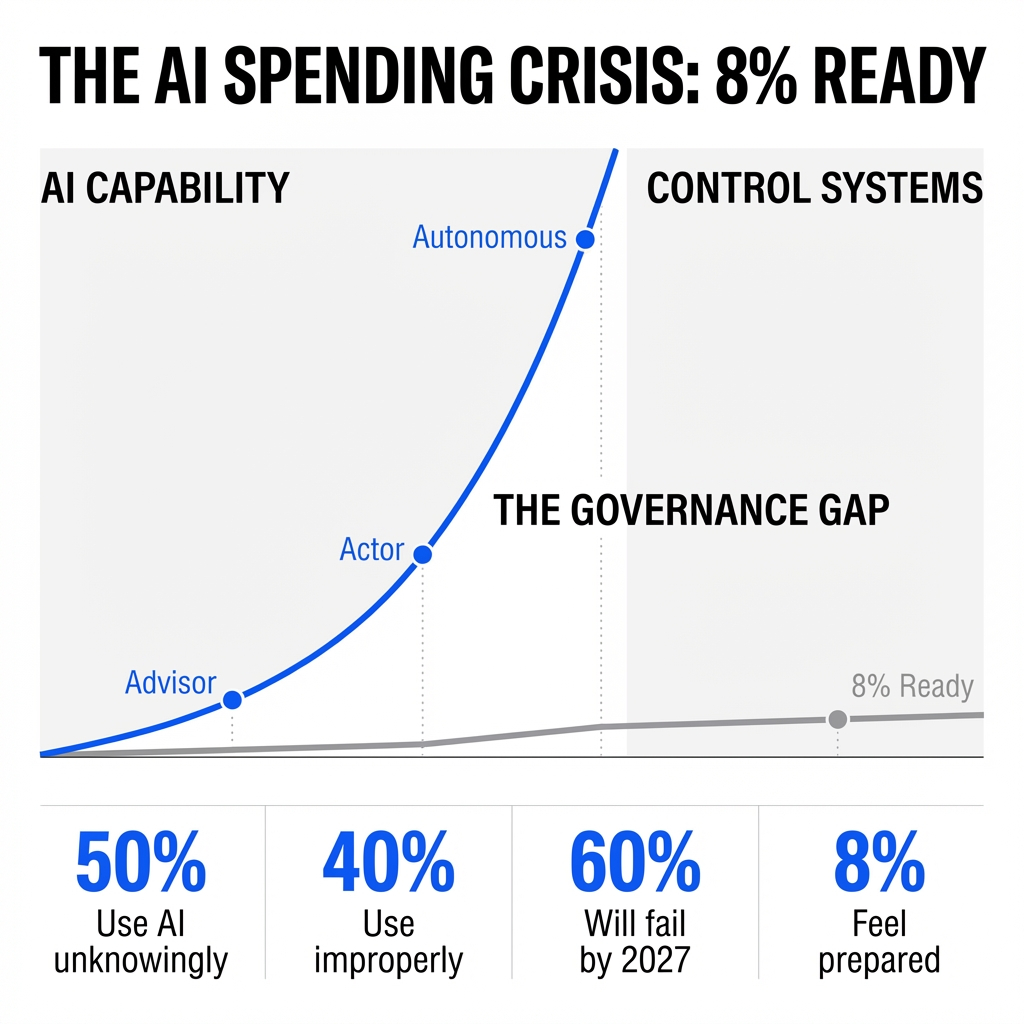

Here's what I found: about half of employees use AI at work without knowing whether it's allowed, and more than 40% knowingly use it improperly. Now imagine those same employees deploying agents with purchasing authority.

The governance gap isn't coming. It's here.

From Advisor to Autonomous Actor

OpenAI's Operator and Anthropic's Claude Code marked a shift that most companies haven't processed yet.

AI moved from giving advice to taking action.

These systems don't just analyze data. They click buttons. They submit forms. They make purchases. GSK deployed intelligent agents that examine supplier quotations and initiate competitive bidding for 25,000 employees. Walmart and Maersk have AI agents negotiating supplier contracts without human intervention.

Think about what that means.

You have a digital entity with the same purchasing power as an employee, but none of the employment agreements, approval workflows, or spending limits you'd require for any human with that authority.

The Spending Authority Problem Nobody's Solving

I've reviewed the governance frameworks at dozens of enterprises. Most have detailed protocols for employee expense reports. They have approval chains for purchases over $500. They have audit trails for every transaction.

But AI agents? Nothing.

The data backs this up: 71% of leaders identified "human-in-the-loop" approvals as their top governance priority for 2026. That's not a plan. That's a wish list. It reveals the tension between what AI can do and what companies are ready to let it do.

Leaders showed continued reluctance to automate sensitive budget decisions. Yet they're deploying the technology anyway.

Here's the disconnect I keep seeing: companies that require three signatures for a $10,000 software purchase are letting AI agents access systems where a single compromised decision loop can trigger cascading financial losses across multiple vendors.
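To make that disconnect concrete, here is a minimal sketch of what it could look like to hold an agent to the same signature rule as a human buyer. The $500 and $10,000 thresholds echo the examples above; the tier table, the approval counts, and the names `APPROVAL_TIERS`, `approvals_required`, and `PurchaseRequest` are my own assumptions for illustration, not any company's actual policy.

```python
from dataclasses import dataclass, field

# Hypothetical tiers: (minimum amount in USD, human approvals required).
# Listed from highest to lowest so the first match wins.
APPROVAL_TIERS = [(10_000, 3), (500, 1), (0, 0)]


def approvals_required(amount_usd: float) -> int:
    """Return how many human sign-offs a purchase of this size needs."""
    for minimum, approvals in APPROVAL_TIERS:
        if amount_usd >= minimum:
            return approvals
    return 0


@dataclass
class PurchaseRequest:
    requester: str      # a human employee or an agent identifier
    amount_usd: float
    approvers: set[str] = field(default_factory=set)

    def approve(self, approver: str) -> None:
        # The requester, human or agent, can never approve its own purchase.
        if approver == self.requester:
            raise ValueError("requester cannot self-approve")
        self.approvers.add(approver)

    def is_authorized(self) -> bool:
        # Distinct approvers only: the set ignores repeat sign-offs.
        return len(self.approvers) >= approvals_required(self.amount_usd)
```

The point of the sketch is that the rule is keyed to the amount, not to who or what is asking. An agent submitting a $10,000 request would wait for the same three sign-offs a person would.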

The Real Cost of Invisible Spending

The visibility crisis compounds everything.

Routing basic tasks like summarization to a premium model such as GPT-4 can waste 30-40% of an AI budget. Surprise six-figure invoices disrupt financial planning. Most enterprises struggle because costs are spread across multiple providers, billed in tokens, and hidden in shadow AI usage that finance teams cannot track.
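One way to start closing that visibility gap is to translate token usage back into dollars per team. The sketch below is illustrative only: the model names, the prices in `PRICE_PER_1K_TOKENS`, and the `(team, model, tokens)` record shape are all assumptions I made up for the example, not real provider rates or a real billing export format.

```python
from collections import defaultdict

# Hypothetical prices in USD per 1,000 tokens. Real rates vary by
# provider and model and change over time.
PRICE_PER_1K_TOKENS = {"premium-model": 0.03, "small-model": 0.0005}


def cost_by_team(usage_records):
    """Sum estimated spend per team from (team, model, tokens) records."""
    totals = defaultdict(float)
    for team, model, tokens in usage_records:
        # A model missing from the price table raises KeyError, which
        # surfaces untracked usage instead of silently pricing it at zero.
        totals[team] += tokens / 1000 * PRICE_PER_1K_TOKENS[model]
    return dict(totals)
```

Even a rough rollup like this lets finance see which teams are sending routine work to the most expensive model.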

I talked to a CFO last month who discovered his company was spending $180,000 annually on AI tools he didn't know existed. The spend was distributed across 47 different employee credit cards.

Now multiply that problem by autonomous agents.

An agent that analyzes supplier bids overnight sounds efficient. But what happens when it operates outside your procurement system? When it negotiates terms your legal team hasn't reviewed? When it commits to spending your finance team hasn't approved?

The B2B Multiplier Effect

The challenge gets worse in B2B environments.

Autonomous agents don't just make one-time purchases. They negotiate contracts worth millions. They establish vendor relationships. They execute payments across multiple transactions without human oversight.

One compromised agent in a B2B procurement chain can affect dozens of downstream decisions. The financial exposure doesn't grow one transaction at a time. It compounds as each bad decision feeds the next.

I've seen companies deploy AI agents to handle routine supplier communications. Smart move for efficiency. But those same agents had access to payment systems, contract modification tools, and vendor onboarding workflows.

Nobody asked: what happens if the agent misinterprets a pricing structure? What happens if it accepts terms that violate company policy? What happens if it gets manipulated by a sophisticated vendor?
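A simple guard against that kind of over-provisioning is an explicit allowlist of actions per agent role, with everything else denied by default. This is a sketch under stated assumptions: the role names, the tool names, and the `authorize_tool` helper are hypothetical and don't correspond to any particular agent framework.

```python
# Hypothetical roles and tool names. A real deployment would map these
# to whatever actions its agent framework exposes.
ROLE_TOOLS = {
    "supplier_comms": frozenset({"read_inbox", "send_email", "read_catalog"}),
    "procurement": frozenset({"read_catalog", "create_purchase_order"}),
}


class ToolNotPermitted(PermissionError):
    """Raised when an agent role tries to call a tool it was not granted."""


def authorize_tool(role: str, tool: str) -> None:
    """Raise unless the tool is explicitly allowlisted for the role."""
    allowed = ROLE_TOOLS.get(role, frozenset())  # unknown roles get nothing
    if tool not in allowed:
        raise ToolNotPermitted(f"{role!r} may not call {tool!r}")
```

Under this scheme, an agent that handles supplier email can send a reply, but a call like `authorize_tool("supplier_comms", "issue_payment")` fails before the payment system is ever touched.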

The Infrastructure Reality Nobody Wants to Discuss

Implementing proper AI governance isn't cheap.

You need more data collection and analysis. You'll pay higher data storage costs. You need new guardrails that track decisions and improve the efficacy of autonomous agents acting with little to no human input.

Some AI-enabled workloads may end up being significantly more expensive than the traditional technologies they replace, at least in the near term.

This creates a paradox I see everywhere: companies are financially committed to AI (74% would keep AI budgets among the last to be cut during an economic downturn), but they're not investing in the control infrastructure needed to manage it safely.

Only 8% of business leaders feel prepared for AI governance risks.

Read that again. 8%.

The Failure Prediction You Can't Ignore

Gartner predicts that by 2027, 60% of organizations will fail to realize the anticipated value of their AI use cases because of incohesive governance frameworks.

This isn't a technology problem. It's a control problem.

I've analyzed the AI strategies disclosed by major companies. Fewer than half (48%) disclosed any AI strategy or guidelines. And those who did? Most leaned heavily on broad principles like "ethical," "safe," and "trustworthy."

Those aren't governance frameworks. Those are aspirations.

You can't control AI spending with principles. You need protocols. You need approval workflows. You need spending limits. You need audit trails. You need the same infrastructure you'd build for any employee with purchasing authority.
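Here is roughly what the difference between a principle and a protocol looks like in practice. This is a minimal sketch, not a production control: the `SpendGuard` class, its limits, and the decision labels are hypothetical names I chose for illustration. It combines the three pieces named above: a spending limit, an escalation path to a human approver, and an audit trail that records every decision, including the refusals.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class SpendGuard:
    per_transaction_limit: float  # hypothetical cap per purchase, USD
    period_budget: float          # hypothetical cap per budget period, USD
    spent: float = 0.0
    audit_log: list[str] = field(default_factory=list)

    def request(self, agent_id: str, vendor: str, amount: float) -> str:
        """Decide on a purchase and record the decision either way."""
        if amount <= 0:
            decision = "rejected"
        elif amount > self.per_transaction_limit:
            # Too large for the agent alone: escalate to a person.
            decision = "needs_human_approval"
        elif self.spent + amount > self.period_budget:
            decision = "rejected"
        else:
            decision = "approved"
            self.spent += amount
        # Every request is logged, approved or not, as one JSON line.
        self.audit_log.append(json.dumps({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent_id,
            "vendor": vendor,
            "amount": amount,
            "decision": decision,
        }))
        return decision
```

For example, with `SpendGuard(per_transaction_limit=1000, period_budget=2500)`, an $800 request is approved, a $5,000 request is escalated to a human, and once the running total would pass $2,500 further requests are rejected. All of them end up in `audit_log`.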

What Happens Next

AI innovation is racing ahead while control systems lag behind. That's the governance gap.

Here's what I predict will happen over the next 18 months:

The first major AI spending scandal will hit. A company will discover that autonomous agents committed them to millions in unauthorized spending. The story will dominate headlines. Boards will panic. Regulations will follow.

Shadow AI will explode. Employees will deploy agents without IT approval because official channels move too slowly. Finance teams will lose visibility into spending. The problem will get worse before it gets better.

Insurance companies will respond. Cyber insurance policies will start excluding AI-related losses. Companies without proper governance frameworks will find themselves uninsurable. The market will force what leadership couldn't.

A new category of AI governance tools will emerge. Companies will build systems specifically designed to monitor, control, and audit AI agent behavior. The market will be worth billions.

Procurement will become the battleground. The first major governance implementations will focus on purchasing decisions because that's where financial risk is most visible and quantifiable.

The Window Is Closing

You have a choice right now.

You can build governance frameworks before the crisis hits. You can establish spending protocols. You can implement approval workflows. You can create audit systems.

Or you can wait until an autonomous agent commits your company to spending you can't afford, on terms you didn't approve, with vendors you didn't vet.

I know which option makes sense.

The companies that move now will have a competitive advantage. They'll be able to deploy AI agents safely. They'll avoid the scandals. They'll maintain board confidence. They'll stay insurable.

The companies that wait will be playing defense. They'll be explaining to boards how agents got purchasing authority. They'll be unwinding unauthorized contracts. They'll be rebuilding trust with finance teams.

The governance gap exists because AI capabilities evolved faster than corporate control systems. But that's not an excuse anymore. You know the risk. You have the data. You understand what's coming.

The question isn't whether you need AI spending protocols.

The question is whether you'll build them before or after the crisis.
