Ireland's Legal Sector Faces the Agent Economy Head-On

I've been watching the Law Society Skillnet in Ireland roll out something that most professional training organizations are still debating in committee meetings.

They're running small, in-person workshops teaching solicitors how to use AI in their daily work. Not theoretical seminars about the future. Not vendor pitches disguised as education. Practical sessions on low-risk AI applications that lawyers can implement immediately.

The timing tells you everything you need to know about where we are in the AI adoption curve.

The Production Shift Is Already Happening

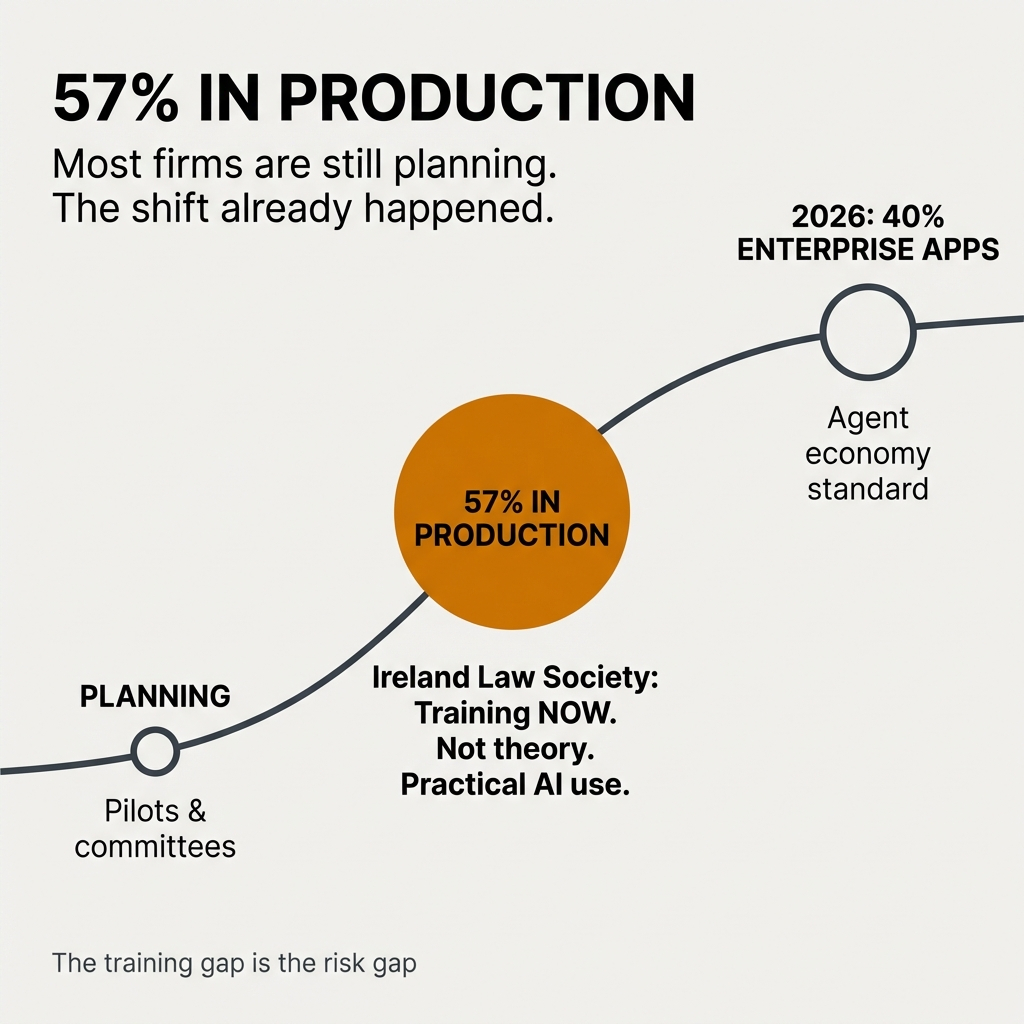

Here's what changed while everyone was still talking about pilots and proofs of concept.

Fifty-seven percent of organizations now have AI agents running in production environments. Another 30% are actively developing agents with concrete deployment plans.

That's not experimentation anymore. That's execution.

Gartner projects that 40% of enterprise applications will integrate task-specific AI agents by 2026. That would be at least an eightfold jump from today's baseline of less than 5%.
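The arithmetic is worth checking, because a multiple and a percentage increase are easy to conflate. Here is a minimal sketch, assuming a baseline of exactly 5% (the projection only says "less than 5%", so the real growth would be somewhat larger). The function name is illustrative, not from any source.

```python
def growth(baseline_pct: float, target_pct: float) -> tuple[float, float]:
    """Return (multiple, percentage increase) when moving from baseline to target."""
    multiple = target_pct / baseline_pct
    pct_increase = (target_pct - baseline_pct) / baseline_pct * 100
    return multiple, pct_increase


# Assumption: baseline taken as exactly 5%, the upper bound of "less than 5%".
multiple, pct_increase = growth(5.0, 40.0)
print(f"{multiple:.1f}x the baseline, a {pct_increase:.0f}% increase")
# prints "8.0x the baseline, a 700% increase"
```

An eightfold multiple corresponds to a 700% increase. Any baseline below 5% pushes both figures higher.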

The shift from dashboard to agent changes everything about how work gets done. Dashboards show you information. Agents execute tasks. They run scripts, manage files, automate workflows. Your dashboard becomes an oversight tool instead of an action center.

Tools like Clawdbot exemplify this transition. They disassemble jobs into discrete tasks and automate the repeatable ones. This doesn't necessarily eliminate roles. In many cases, it intensifies day-to-day work by removing the buffer tasks and leaving only the high-judgment activities.

Why Ireland's Law Society Gets It

The Law Society Skillnet training program focuses on three things that matter right now.

Practical application. The workshops teach specific use cases you can implement tomorrow. Not someday. Tomorrow.

Professional responsibility. Legal professionals operate under strict ethical obligations. The training emphasizes how to use AI without compromising those duties.

Data practices. Robust data handling isn't optional in legal work. The sessions cover how to maintain data integrity when AI enters your workflow.

Donna O'Leary, a dual-qualified solicitor specializing in AI implementation for law firms, leads these workshops. Each session delivers three CPD hours. That CPD accreditation signals something important: this isn't extra. It's core.

The EU AI Act adds urgency to this training push. For the most serious violations, organizations face potential fines of up to €35 million or 7% of worldwide annual turnover, whichever is higher. The Act mandates audits, data protection impact assessments, and governance frameworks.
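To get a feel for that exposure, here is a rough sketch of how the top penalty ceiling scales with company size. It assumes the "whichever is higher" rule that applies to the Act's top tier for larger undertakings, ignores the lower tiers and the separate treatment of smaller companies, and uses a hypothetical function name and made-up turnover figures.

```python
FIXED_CAP_EUR = 35_000_000      # fixed ceiling for the top penalty tier
TURNOVER_SHARE_PERCENT = 7      # share of worldwide annual turnover


def max_top_tier_fine_eur(worldwide_annual_turnover_eur: int) -> int:
    """Upper bound on a top-tier fine, in whole euros (assumes 'whichever is higher')."""
    turnover_cap = worldwide_annual_turnover_eur * TURNOVER_SHARE_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_cap)


# Illustrative turnovers only; these are not real firms.
for turnover in (100_000_000, 500_000_000, 1_000_000_000):
    print(f"turnover €{turnover:,} -> ceiling €{max_top_tier_fine_eur(turnover):,}")
```

Under these assumptions the fixed €35 million figure dominates until turnover reaches €500 million. Above that, the 7% share sets the ceiling.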

You can't govern what you don't understand. The Law Society recognized this and is building training infrastructure before the crisis hits.

The Hidden Risk in Positive Attitudes

Here's something that surprised me in the research.

Users with positive attitudes toward AI can be more susceptible to biased or misleading guidance from AI systems. Not less. More.

The enthusiasm creates blind spots. When you believe the tool is helping you, you question its outputs less frequently. You accept suggestions more readily. You override your own judgment more often.

This creates a training imperative that goes beyond technical skills. Users need to develop critical evaluation practices specifically for AI-generated content.

The numbers back this up. Seventy-two percent of organizations worry about bias in generative AI, but only 5% feel confident in their ability to spot that bias. Even worse, 71% of workers say their organizations aren't doing enough to combat AI bias.

The gap between concern and capability is massive.

Meanwhile, 75% of enterprise workers report that AI helped them complete tasks they couldn't do before. That capability expansion is real. It's also dangerous if you don't pair it with rigorous testing and continuous training.

The Enterprise Decision Stack

I've talked to enough technology leaders to recognize a pattern in how they describe their AI infrastructure choices.

They use words like "irreversible" and "foundational."

That language reflects reality. The decisions you make now about data architecture, governance frameworks, and integration patterns will constrain your options for years.

Two-thirds of leaders cite agentic system complexity as their top barrier to deployment. This has held steady for two consecutive quarters. The complexity isn't decreasing. Organizations are just accepting it as the baseline condition.

Seventy-five percent prioritize security, compliance, and auditability as their most critical requirements for agent deployment. Notice what's missing from that list: speed, innovation, competitive advantage. Those concerns rank lower than the governance requirements.

The investment signals commitment. Sixty-seven percent of business leaders say they'll maintain AI spending even if a recession hits in the next 12 months. They project deploying $124 million over the coming year.

That's not pilot money. That's production infrastructure money.

The Scaling Problem

Eighty-eight percent of enterprises use AI in some capacity. Only 33% successfully scale their programs.

The failure points are predictable: multi-user authorization complexity, integration challenges, inadequate monitoring infrastructure. Organizations that succeed manage six dimensions simultaneously: strategy, talent, operating model, technology, data, and adoption mechanisms.

You can't outsource this coordination to a vendor. It requires internal strategic capacity.

For organizations getting it right, 66% report measurable value through increased productivity. The competitive pressure is real: 46% of respondents worry their company is falling behind competitors in agent adoption.

What ROI Actually Looks Like

I'm skeptical of most ROI claims in technology. They tend to measure inputs instead of outcomes or compare ideal states to worst-case baselines.

But some results are hard to dismiss.

A major manufacturer reduced production optimization work from six weeks to one day using AI agents. A global investment company deployed agents across its sales process, giving salespeople 90% more time to spend with customers.

In healthcare, AtlantiCare tested an AI-powered clinical assistant with 50 providers. Eighty percent adopted it. Those using the tool experienced a 42% reduction in documentation time, saving approximately 66 minutes per day.
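Those two figures imply a baseline that the summary doesn't state. Here is a quick back-of-the-envelope sketch, assuming the 42% reduction and the 66 minutes describe the same daily documentation workload. The function name is illustrative.

```python
def implied_baseline_minutes(saved_minutes: float, reduction_fraction: float) -> float:
    """Baseline daily minutes implied by an absolute saving and a fractional reduction."""
    return saved_minutes / reduction_fraction


baseline = implied_baseline_minutes(66, 0.42)
remaining = baseline - 66
print(f"implied baseline: about {round(baseline)} min/day")    # about 157 min/day
print(f"remaining after AI: about {round(remaining)} min/day")  # about 91 min/day
```

On that reading, providers were spending a little over two and a half hours a day on documentation before the pilot.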

That's not marginal improvement. That's structural change in how work gets done.

The marketing sector shows similar patterns. Eighty-eight percent of marketers now use AI tools daily. The AI marketing industry has grown to $47.32 billion. Seventy-three percent of marketing teams use generative AI in 2025, up from 37% in 2023.

Ninety-three percent of CMOs report clear ROI from generative AI. Eighty-three percent of marketing teams say the same.

The 2026 Inflection Point

Every technology analyst I respect points to 2026 as the year organizations move from deployment to discovery.

You'll put AI into production. Then you'll discover what breaks at scale.

The Law Society Skillnet workshops represent one approach to preparing for that discovery phase. Train people before the crisis. Build evaluation skills before the high-stakes decisions arrive. Establish governance frameworks before the regulatory pressure intensifies.

This isn't about getting ahead of the curve. The curve already passed. This is about not falling further behind.

The organizations that succeed will be the ones that treat AI deployment as a strategic capability requiring continuous investment in training, governance, and infrastructure. Not a technology purchase. A capability build.

The marketing prediction that AI will write 70% of all marketing copy by 2026 isn't a distant future scenario. It's 12 months away. The legal sector faces similar transformation timelines.

Ireland's Law Society chose to act instead of plan. They're running workshops, building competency, establishing standards. That's what preparation looks like when you take the timeline seriously.

What This Means for You

If you work in a professional services firm, you're watching this transformation happen in real time.

The question isn't whether AI will change your work. It's whether you'll develop the skills to use it responsibly before you're forced to use it reactively.

Training programs like the Law Society Skillnet workshops offer a structured path. But the underlying principle applies everywhere: build evaluation capacity before you need it.

Learn to spot bias in AI outputs. Understand the data practices that maintain integrity. Recognize the professional responsibility boundaries that AI can't cross.

The agent economy is here. Your dashboard is becoming an oversight tool. The tasks you used to do manually are getting automated. What remains will be the work that requires judgment, context, and accountability.

That's the work you need to get better at. Right now.
