How I Actually Evaluate Marketing Systems (The Stuff Nobody Talks About)

I've evaluated dozens of marketing systems over the past decade. The tools change. The platforms evolve. But the problems stay remarkably consistent.

Most evaluations focus on the wrong things. They audit tools. They count licenses. They review dashboards. Then they wonder why nothing improves.

I learned to look somewhere else entirely.

I Start Where the Money Disappears

The first thing I do is follow the waste.

Not the obvious waste. Everyone can spot an unused tool or a campaign that flopped. I'm looking for the invisible leaks, the places where money disappears without anyone noticing. Marketers themselves estimate that 21% of their budget is wasted.

Here's what that looks like in practice:

I map every handoff point. Lead gen to sales. Content to distribution. Data input to reporting output. Every place where information moves from one system to another.

These transitions are where things break.

A lead comes in with incomplete data. Someone manually enriches it. That takes 15 minutes. Multiply that by 200 leads per week. You've just found 50 hours of waste every week, more than 200 hours a month, and none of it shows up in any report.
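The arithmetic is worth making explicit, because the weekly and monthly figures are easy to mix up and this is exactly the kind of number nobody puts on a dashboard. A minimal sketch using the 15-minute and 200-lead figures; the function names and the 52/12 weeks-per-month conversion are my own assumptions for illustration:

```python
# Hypothetical helpers: estimate hidden labor from one manual handoff step.
WEEKS_PER_MONTH = 52 / 12  # assumption: average number of weeks in a month

def weekly_hours(minutes_per_item: float, items_per_week: int) -> float:
    """Hours per week spent on a manual step applied to every item."""
    return minutes_per_item * items_per_week / 60

def monthly_hours(minutes_per_item: float, items_per_week: int) -> float:
    """The same waste, expressed per average month."""
    return weekly_hours(minutes_per_item, items_per_week) * WEEKS_PER_MONTH

# Manual lead enrichment: 15 minutes x 200 leads per week
print(weekly_hours(15, 200))             # 50.0
print(round(monthly_hours(15, 200), 1))  # 216.7
```

Run the same two lines for every manual workaround you document and the invisible leaks start to have a size.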

Or a campaign runs in three different tools. Each tool tracks conversions differently. The numbers don't match. So someone spends hours every week reconciling data instead of analyzing it.
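Reconciliation doesn't have to be a weekly manual ritual. One option is to compare each tool's count automatically and only surface the campaigns where they genuinely disagree. A sketch, assuming you can export a per-campaign conversion count from each tool; the tool names, the numbers, and the 5% tolerance are hypothetical:

```python
from typing import Dict, List

def flag_discrepancies(
    counts: Dict[str, Dict[str, int]],  # campaign -> {tool name: reported conversions}
    tolerance: float = 0.05,            # assumption: up to a 5% spread is acceptable
) -> List[str]:
    """Return campaigns whose tools disagree by more than `tolerance`,
    measured as (max - min) / max across the tools' counts."""
    flagged = []
    for campaign, by_tool in counts.items():
        values = list(by_tool.values())
        if not values or max(values) == 0:
            continue  # nothing reported, or every tool says zero: nothing to reconcile
        high, low = max(values), min(values)
        if (high - low) / high > tolerance:
            flagged.append(campaign)
    return flagged

# Hypothetical exports from three tools
counts = {
    "spring_webinar": {"ad_manager": 120, "analytics": 118, "crm": 117},
    "q3_ebook": {"ad_manager": 340, "analytics": 255, "crm": 210},
}
print(flag_discrepancies(counts))  # ['q3_ebook']
```

This won't tell you which number is right. It tells you where to spend reconciliation time, so the hours go to the campaigns that actually disagree.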

I document every manual workaround. Every spreadsheet someone maintains "just to keep things straight." Every Slack message that says "can you check if this number is right?"

These workarounds are symptoms. They tell me the system isn't doing its job.

The Tool Audit That Actually Matters

On average, companies use only 33% of their marketing technology's capabilities. That stat gets quoted a lot. But nobody asks the right follow-up question.

Why?

I've found three reasons that show up repeatedly:

The tool was bought for a feature nobody actually needed. Someone saw a demo. It looked impressive. But the daily work doesn't require that capability. The team defaults to the 3-4 features that match their actual workflow.

The integration never happened. The tool works great in isolation. But connecting it to the existing stack required custom development. That got deprioritized. Now it's an island. People avoid it because it means double entry.

Nobody was trained properly. The team knows enough to do the basics. The advanced features that justify the cost? Those require expertise nobody has time to develop.

So I don't just audit what tools exist. I audit what tools do the actual work.

I spend a day watching people work. Not in a meeting. Not in a demo. I watch them execute their daily tasks. Which tabs do they have open? Which tools do they switch between? Where do they pause and sigh?

That last one matters more than you'd think.

The sigh usually means "this should be easier." And they're right.

The Questions That Reveal System Health

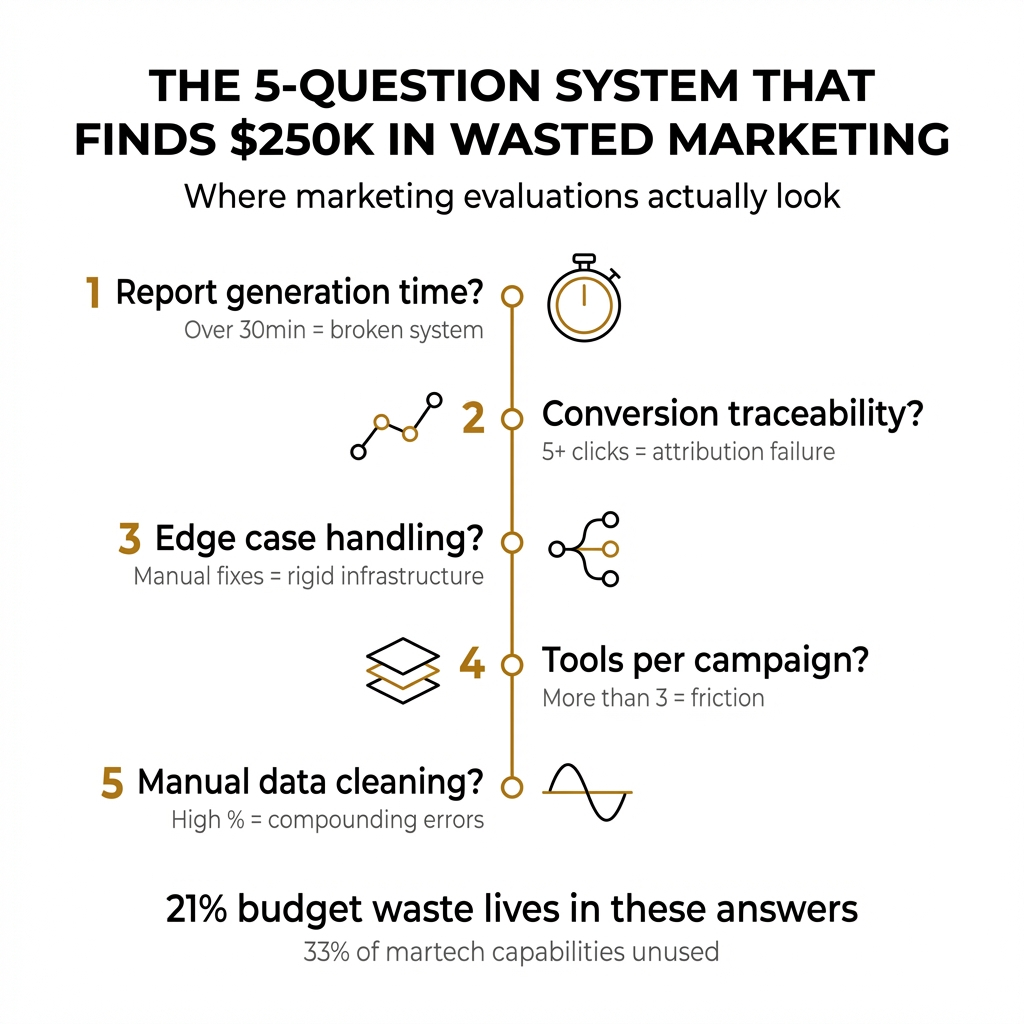

I ask the same five questions in every evaluation. The answers tell me everything I need to know.

How long does it take to generate an accurate campaign report?

If the answer is more than 30 minutes, something is broken. Either the data isn't centralized, the attribution model is unclear, or multiple sources contradict each other.

Good systems produce accurate reports in minutes. Great systems produce them automatically.

Can you trace a conversion back to its source in under five clicks?

This tests attribution infrastructure. If someone has to export data, cross-reference spreadsheets, or "just know" where leads came from, the system isn't tracking properly.

I've seen teams spend hours debating which channel deserves credit for a conversion. That's not a strategy problem. That's a systems problem.
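Credit debates often stall because nobody has written the attribution rule down. A minimal sketch of three common rule-based models (first-touch, last-touch, linear) applied to a single conversion path; the channel names are hypothetical, and real attribution setups also deal with lookback windows and deduplication, which this ignores:

```python
from collections import defaultdict
from typing import Dict, List

def attribute(path: List[str], model: str) -> Dict[str, float]:
    """Split one conversion's credit across the channels in `path`
    (ordered from first touch to last touch) under a simple rule-based model."""
    if not path:
        return {}
    credit: Dict[str, float] = defaultdict(float)
    if model == "first_touch":
        credit[path[0]] = 1.0
    elif model == "last_touch":
        credit[path[-1]] = 1.0
    elif model == "linear":
        for channel in path:
            credit[channel] += 1.0 / len(path)  # equal share per touch
    else:
        raise ValueError(f"unknown model: {model}")
    return dict(credit)

path = ["paid_social", "email", "organic_search", "email"]
print(attribute(path, "first_touch"))  # {'paid_social': 1.0}
print(attribute(path, "last_touch"))   # {'email': 1.0}
print(attribute(path, "linear"))       # {'paid_social': 0.25, 'email': 0.5, 'organic_search': 0.25}
```

Which model is "correct" matters less than having one. Once the rule is explicit, the debate becomes a decision you make once instead of an argument you have every week.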

What happens when a lead doesn't fit the standard workflow?

Every system has edge cases. The question is whether the system handles them or breaks down.

If the answer involves manual intervention, special spreadsheets, or "we have a process for that," the system is too rigid. You're designing around the tools instead of the other way around.

How many tools does someone need to access to complete a single campaign?

I've seen campaigns that require seven different logins. Email platform. Analytics. Ad manager. CRM. Project management. Asset storage. Reporting dashboard.

Each transition is friction. Each login is a chance to lose focus. Each tool switch is a place where mistakes happen.

The number should be three or fewer for most campaigns.

What percentage of your data requires manual cleaning before it's usable?

This question makes people uncomfortable. Because the answer is usually "more than we'd like to admit."

Data quality issues compound. A field that's 90% accurate sounds good. But if you have ten fields, each independently 90% accurate, only about 35% of your records are completely correct (0.9^10 ≈ 0.35).
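The compounding is easy to verify, and it's worth measuring on your own records, because real errors are rarely independent. A minimal sketch; the three validity rules and the sample records are hypothetical stand-ins for whatever "usable" means in your stack:

```python
import re
from typing import Callable, Dict, List

# Theoretical: ten fields, each independently 90% accurate
print(round(0.9 ** 10, 3))  # 0.349

# Hypothetical validity rules, one per field
RULES: Dict[str, Callable[[str], bool]] = {
    "email": lambda v: re.fullmatch(r"[^@\s]+@[^@\s]+\.[^@\s]+", v) is not None,
    "country": lambda v: len(v) == 2 and v.isupper(),  # assumes two-letter codes like "US"
    "company": lambda v: bool(v.strip()),
}

def quality_report(records: List[Dict[str, str]]) -> Dict[str, float]:
    """Share of records passing each field's rule, plus the share passing every rule."""
    if not records:
        return {}
    n = len(records)
    report = {f: sum(rule(r.get(f, "")) for r in records) / n for f, rule in RULES.items()}
    report["all_fields"] = sum(
        all(rule(r.get(f, "")) for f, rule in RULES.items()) for r in records
    ) / n
    return report

records = [
    {"email": "ana@example.com", "country": "US", "company": "Acme"},
    {"email": "bob@example", "country": "US", "company": "Globex"},     # bad email
    {"email": "cy@example.com", "country": "usa", "company": "Initech"},  # bad country
    {"email": "dee@example.com", "country": "DE", "company": ""},        # missing company
]
print(quality_report(records))
# {'email': 0.75, 'country': 0.75, 'company': 0.75, 'all_fields': 0.25}
```

In this sample the errors sit in different records, so only 25% of records are fully clean, lower even than the 0.75³ ≈ 42% that independent errors would predict. Per-field accuracy can look fine while record-level accuracy doesn't.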

I look for where data enters the system. Forms. Integrations. Manual imports. Then I trace how it moves. Each step is an opportunity for degradation.

The Things I Look For That Others Miss

Some problems hide in plain sight.

Redundant data entry. The same information gets entered in multiple places. Contact details in the CRM and the email platform and the webinar tool. Each entry is a chance for inconsistency.

I map data flow. Where does information originate? Where does it need to exist? What's the single source of truth?
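Mapping data flow gets concrete quickly if you join the same contacts across systems and list every field where they disagree. A sketch, assuming each tool can export contacts keyed by a shared identifier (here, an email address); the system names and fields are hypothetical:

```python
from typing import Dict, List, Tuple

Contact = Dict[str, str]

def find_conflicts(
    systems: Dict[str, Dict[str, Contact]],  # system name -> {contact key: fields}
    fields: List[str],
) -> List[Tuple[str, str, Dict[str, str]]]:
    """Return (contact key, field, {system: value}) wherever systems disagree."""
    conflicts = []
    all_keys = set().union(*(records.keys() for records in systems.values()))
    for key in sorted(all_keys):
        for field in fields:
            # Only compare systems that actually hold this field for this contact
            values = {
                name: records[key][field]
                for name, records in systems.items()
                if key in records and field in records[key]
            }
            if len(set(values.values())) > 1:
                conflicts.append((key, field, values))
    return conflicts

systems = {
    "crm": {"ana@example.com": {"company": "Acme Corp", "title": "VP Marketing"}},
    "email_platform": {"ana@example.com": {"company": "Acme", "title": "VP Marketing"}},
    "webinar_tool": {"ana@example.com": {"company": "Acme Corp"}},
}
for conflict in find_conflicts(systems, ["company", "title"]):
    print(conflict)
# ('ana@example.com', 'company', {'crm': 'Acme Corp', 'email_platform': 'Acme', 'webinar_tool': 'Acme Corp'})
```

Every row it prints is a field without a single source of truth.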

Often, there isn't one.

Invisible dependencies. One system relies on another, but nobody documented it. A report pulls from a database that gets updated by a script that depends on a third-party integration.

Then the integration breaks. The script fails silently. The report shows outdated data. Nobody notices for two weeks.

I ask people to explain their systems out loud. When they say "and then it just works," that's where I dig deeper.
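The antidote to silent failure is turning "it just works" into an explicit freshness check. A minimal sketch, assuming each data source exposes a last-updated timestamp; the source names and maximum ages are hypothetical and should come from how often each feed is supposed to refresh:

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List

# Hypothetical: how stale each source may get before someone should hear about it
MAX_AGE: Dict[str, timedelta] = {
    "crm_sync": timedelta(hours=1),
    "ad_spend_import": timedelta(days=1),
    "report_database": timedelta(days=1),
}

def stale_sources(last_updated: Dict[str, datetime], now: datetime) -> List[str]:
    """Return sources that are overdue or have never reported an update."""
    stale = []
    for source, max_age in MAX_AGE.items():
        ts = last_updated.get(source)
        if ts is None or now - ts > max_age:
            stale.append(source)
    return stale

now = datetime(2024, 5, 20, 9, 0, tzinfo=timezone.utc)
last_updated = {
    "crm_sync": now - timedelta(minutes=20),
    "ad_spend_import": now - timedelta(days=14),  # integration broke two weeks ago
}
print(stale_sources(last_updated, now))  # ['ad_spend_import', 'report_database']
```

Run on a schedule and routed to a channel the team already watches, a check like this turns "nobody noticed for two weeks" into an alert on the first missed refresh.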

Metrics that don't connect to decisions. Dashboards full of numbers that nobody acts on. KPIs that look impressive but don't change behavior.

For every metric, I ask: "What decision does this inform?" If the answer is vague or nonexistent, the metric is vanity.

Companies with defined marketing systems see up to 30% higher marketing ROI. But that only happens when the system connects measurement to action.

Permission bottlenecks. Critical functions that only one person can perform. Campaign approvals that require three signatures. Access controls so strict that people work around them.

I track how long it takes to execute common tasks. Not the work itself. The waiting. The approvals. The "let me check with someone."
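Tracking the waiting is easier if each task records when it changes state. A sketch, assuming a hypothetical event log of (timestamp, state) transitions per task; the state names are my own, and durations are calendar time, nights and weekends included:

```python
from datetime import datetime
from typing import Dict, List, Tuple

Event = Tuple[datetime, str]  # (when the state began, state name)

def time_by_state(events: List[Event], end: datetime) -> Dict[str, float]:
    """Sum hours spent in each state; each state lasts until the next event (or `end`)."""
    totals: Dict[str, float] = {}
    ordered = sorted(events)
    for (start, state), (nxt, _) in zip(ordered, ordered[1:] + [(end, "")]):
        totals[state] = totals.get(state, 0.0) + (nxt - start).total_seconds() / 3600
    return totals

# Hypothetical campaign task that needed one approval
events = [
    (datetime(2024, 5, 20, 9, 0), "working"),
    (datetime(2024, 5, 20, 11, 0), "waiting_approval"),
    (datetime(2024, 5, 22, 11, 0), "working"),
]
totals = time_by_state(events, end=datetime(2024, 5, 22, 12, 0))
print(totals)  # {'working': 3.0, 'waiting_approval': 48.0}
wait_share = totals["waiting_approval"] / sum(totals.values())
print(f"{wait_share:.0%} of elapsed time spent waiting")  # 94% of elapsed time spent waiting
```

Three hours of work, two days of waiting. That ratio is the number worth putting in front of whoever owns the approval process.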

Time spent waiting is time the system is failing.

What Good Actually Looks Like

I've seen systems that work. They share common traits.

Data flows in one direction. Information enters once. It propagates automatically. Updates happen in real time. There's no reconciliation because there's nothing to reconcile.

Reports answer questions, not just display numbers. Instead of "here are the click-through rates," the system says "this campaign is underperforming because the audience targeting is too broad."

Failures are visible immediately. When something breaks, someone knows within minutes. Not because they're monitoring constantly, but because the system alerts them.

The team spends time on strategy, not mechanics. They're not fighting with tools. They're not cleaning data. They're not reconciling reports. They're making decisions.

That's the real test. Where does the team spend its time?

The Evaluation Framework I Actually Use

I work in a specific order. It's not random.

First, I map the current state. Not what people think happens. What actually happens. I follow a lead from first touch to closed deal. I track a campaign from concept to report. I document every tool, every handoff, every decision point.

This takes longer than people expect. The documented process and the actual process rarely match.
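One way to make that mismatch concrete is to record the steps a lead actually passes through and diff them against the documented process. A sketch using Python's standard `difflib.SequenceMatcher`, which works on lists as well as strings; the step names are hypothetical:

```python
import difflib
from typing import List, Tuple

def process_gaps(documented: List[str], observed: List[str]) -> List[Tuple[str, List[str], List[str]]]:
    """Return (kind, documented steps, actual steps) wherever the two diverge.
    `kind` is difflib's opcode tag: 'replace', 'delete', or 'insert'."""
    matcher = difflib.SequenceMatcher(a=documented, b=observed, autojunk=False)
    return [
        (tag, documented[i1:i2], observed[j1:j2])
        for tag, i1, i2, j1, j2 in matcher.get_opcodes()
        if tag != "equal"
    ]

# Hypothetical lead-handling steps
documented = ["form_fill", "auto_enrich", "score", "route_to_sdr", "sdr_call"]
observed = ["form_fill", "export_csv", "manual_enrich", "score", "route_to_sdr", "sdr_call"]

for kind, doc_steps, real_steps in process_gaps(documented, observed):
    print(f"{kind}: documented {doc_steps} -> actual {real_steps}")
# replace: documented ['auto_enrich'] -> actual ['export_csv', 'manual_enrich']
```

Each non-equal span is a place where the documented process is fiction and someone has built a workaround.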

Second, I identify waste. Manual work that could be automated. Redundant steps. Data quality issues. Integration gaps. Permission bottlenecks. Every place where time or money disappears without adding value.

Third, I assess tool utilization. What's being used? What's being ignored? What's being worked around? Which tools are central to daily operations and which are expensive shelf-ware?

Fourth, I evaluate data integrity. Where does data come from? How does it move? Where does it get transformed? What's the error rate? How long does it take to trust a number?

Fifth, I test measurement validity. Do the metrics connect to business outcomes? Can people explain why they track what they track? Do reports inform decisions or just confirm assumptions?

Finally, I map the gaps. What's missing? What's broken? What's working but fragile? What's technically functional but practically useless?

The output isn't a list of recommendations. It's a prioritized roadmap. Fix this first because it's blocking everything else. Address this second because it's creating the most waste. Defer this because it's not actually a problem.
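The ordering logic (blockers first, then the biggest waste, defer what isn't really a problem) can be written down as a dependency-aware sort. A sketch using the standard library's `graphlib.TopologicalSorter` (Python 3.9+); the `Finding` fields and sample findings are hypothetical, and the hour estimates would come from the mapping and waste work, not from the code:

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter  # Python 3.9+
from typing import List

@dataclass
class Finding:
    name: str
    waste_hours_per_month: float                     # estimated time lost
    blocks: List[str] = field(default_factory=list)  # findings that can't be fixed before this one
    real_problem: bool = True                        # False: looked bad, but isn't costing anything

def roadmap(findings: List[Finding]) -> List[str]:
    """Order fixes so blockers come first; among fixes that are ready,
    take the one wasting the most time. Non-problems are deferred to the end."""
    active = {f.name: f for f in findings if f.real_problem}
    ts: TopologicalSorter = TopologicalSorter()
    for f in active.values():
        ts.add(f.name)
        for blocked in f.blocks:
            if blocked in active:
                ts.add(blocked, f.name)  # `blocked` can only start after `f`
    ts.prepare()  # raises graphlib.CycleError if findings block each other in a loop
    order: List[str] = []
    ready: List[str] = []
    while ts.is_active():
        ready.extend(ts.get_ready())
        ready.sort(key=lambda name: active[name].waste_hours_per_month)
        nxt = ready.pop()  # highest-waste fix that nothing else is blocking
        order.append(nxt)
        ts.done(nxt)
    return order + [f"defer: {f.name}" for f in findings if not f.real_problem]

findings = [
    Finding("Duplicate contact entry", 30),
    Finding("Broken CRM-to-email integration", 40,
            blocks=["Duplicate contact entry", "Attribution gaps"]),
    Finding("Attribution gaps", 60),
    Finding("Unused dashboard", 0, real_problem=False),
]
print(roadmap(findings))
# ['Broken CRM-to-email integration', 'Attribution gaps', 'Duplicate contact entry', 'defer: Unused dashboard']
```

"Attribution gaps" wastes the most time, but it still waits for the integration fix, because it can't be solved until that's done.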

Why Most Evaluations Fail

I've seen plenty of evaluations that went nowhere.

They audit tools instead of workflows. They count features instead of measuring outcomes. They produce reports that sit in shared drives and never inform action.

The problem isn't the analysis. It's the approach.

Evaluating a marketing system isn't like auditing software. You're not checking boxes. You're understanding how work actually gets done. How decisions actually get made. Where things actually break down.

That requires watching people work. Asking uncomfortable questions. Challenging assumptions about what's "just how we do things."

It requires acknowledging that the documented process is fiction. The real process is what happens when someone needs to hit a deadline.

And it requires accepting that the tools aren't the problem. The tools are symptoms. The problem is usually the system underneath them.

When your system doesn't reflect how work actually happens, no tool will fix it. When your metrics don't connect to decisions, no dashboard will help. When your data quality is poor, no AI will save you.

I've learned to start with the fundamentals. Map the work. Find the waste. Fix the foundations.

The tools become obvious after that.

What I'd Do Differently

Early in my career, I optimized tools. I'd find inefficiencies and recommend better software. Sometimes it worked. Often it didn't.

I've learned to optimize systems instead.

That means understanding the work before evaluating the tools. It means talking to the people doing the daily execution, not just the people who bought the software.

It means asking "why does this take so long?" instead of "which tool should we use?"

The best evaluations I've done started with observation, not analysis. I watched how teams actually worked. I noted every friction point. Every workaround. Every moment of confusion.

Then I mapped that against the intended design.

The gaps between intention and reality tell you everything you need to know.

Your marketing system should make work easier, not harder. It should produce clarity, not confusion. It should enable decisions, not just document activity.

If it's not doing that, the evaluation isn't about finding better tools. It's about understanding why the current system fails to serve the people using it.

That's where I start. And that's usually where I find the answer.
